Payments need permission: As AI enters payments, the checkout may become the industry’s trust anchor

Key Insights

- People are more open to AI supporting specific payment tasks than to systems making financial decisions on their behalf.

- Loss of control, unclear consent, and invisible decision-making are the biggest worries and barriers to acceptance.

- Nearly half of respondents believe AI-managed payments will become the norm, but remain hesitant to embrace them without clear user control.

- Consumers are more receptive to AI when its role is visible and tied to a clear benefit such as improving security, speeding up checkout, or alerting users to issues.

- Qualitative responses reveal strong emotional reactions. Recurring language patterns highlighted fear, distrust, and loss of control as dominant emotions associated with AI making payment decisions independently.

AI is fast moving from experimentation to real-world deployment across payments. But how comfortable are consumers with systems that can make payment decisions on their behalf?

To explore this question, we surveyed more than 3,000 adults across the US and the UK. The findings reveal clear tensions between growing expectations of AI in commerce and persistent concerns around control, consent, and visibility in payments.

We observe these dynamics across age groups and between markets, and highlight why environments such as in-person payments may play a vital role in how AI capabilities are introduced and orchestrated within the wider payments ecosystem.

How familiar are you with the idea of AI automatically managing payments or making payment decisions for you?

Starting with awareness: across both markets, familiarity with AI agents managing payments is moderate rather than widespread. Around four in ten respondents in both the UK (44%) and the US (42%) say they are familiar with the idea, while the majority still feel unfamiliar. Importantly, only 12% in each country describe themselves as “very familiar,” indicating that most awareness is shallow rather than confident.

AI-led payments are not an entirely new concept, but for most people they remain poorly understood and abstract. People may have heard of AI managing payments, but the data does not suggest they feel able to clearly see, understand, or confidently assess how it would work in practice.

Gender differences are present but not dramatic: men show higher exposure than women in both markets. In the UK, familiarity is fairly balanced, although women skew more towards unfamiliarity. In the US, the gap is clearer, with men showing higher familiarity.

Familiarity with AI‑led payment decisions, by age

Perhaps unsurprisingly, age is where the strongest patterns appear. In the UK in particular, 18 - 24s stand out as highly familiar with the concept of AI agents handling payments: over half say they are very familiar, while US familiarity at the same age is far lower. From 35 upwards, familiarity declines steadily in both countries, and by 65+ unfamiliarity dominates, particularly in the UK. Overall, familiarity peaks among younger respondents in both markets.

To what extent do you agree or disagree with the following statement: “I would feel comfortable letting AI automatically select the best payment option for me at checkout.”

Across both countries, discomfort outweighs comfort, but the balance differs. In the UK, only 20% are comfortable letting AI select payment options, versus 62% uncomfortable and 18% neutral. The US is less resistant but still net negative, with 32% comfortable, 50% uncomfortable, and 18% neutral. The gap seems to be driven less by strong rejection than by moderate agreement: 35% of UK respondents strongly disagree, compared with 33% in the US, while the US has a much higher share of moderate “agree” responses (24% vs 14%), pointing to conditional rather than enthusiastic acceptance. What this means is that:

Even when the scenario is framed narrowly around checkout rather than AI broadly “managing payments”, comfort remains limited. The hurdle is not AI itself, but the idea of delegating decision-making in the payment moment, even if only for a single transaction rather than an ongoing system managing finances more broadly.

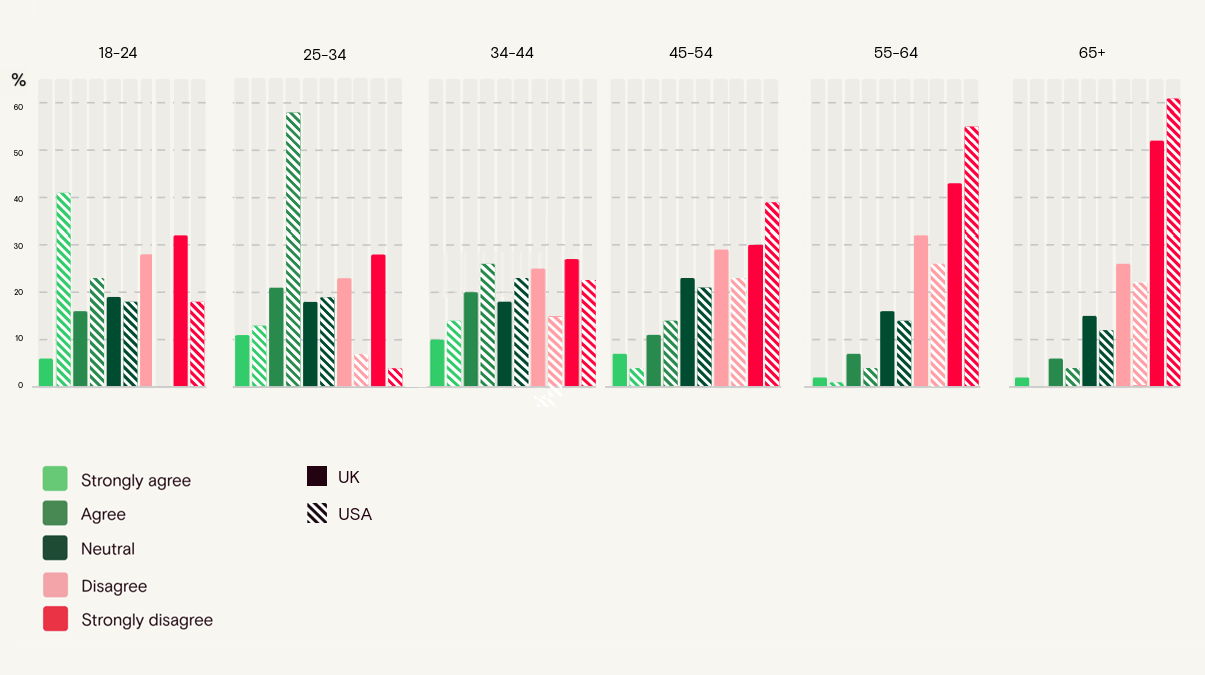

Letting AI choose the payment method: comfort by age

Age exposes the biggest difference between our two markets. In the US, comfort with AI agents is low across every age group, even among 18 - 24s: only 22% agree, while 60% already disagree, and resistance increases further with age. The UK starts from a dramatically different place: among 18 - 24s, an astounding 64% are comfortable, driven by 41% strongly agreeing. This drops sharply with age. By 45 - 54, disagreement overtakes agreement, and among respondents in their 60s the UK reaches 84% disagreement, with the US showing a similarly high level of resistance in older groups.

There does not seem to be a broad mandate for autonomous payment decision-making, even at the physical checkout. Where openness does exist, it looks more like conditional acceptance than blanket trust.

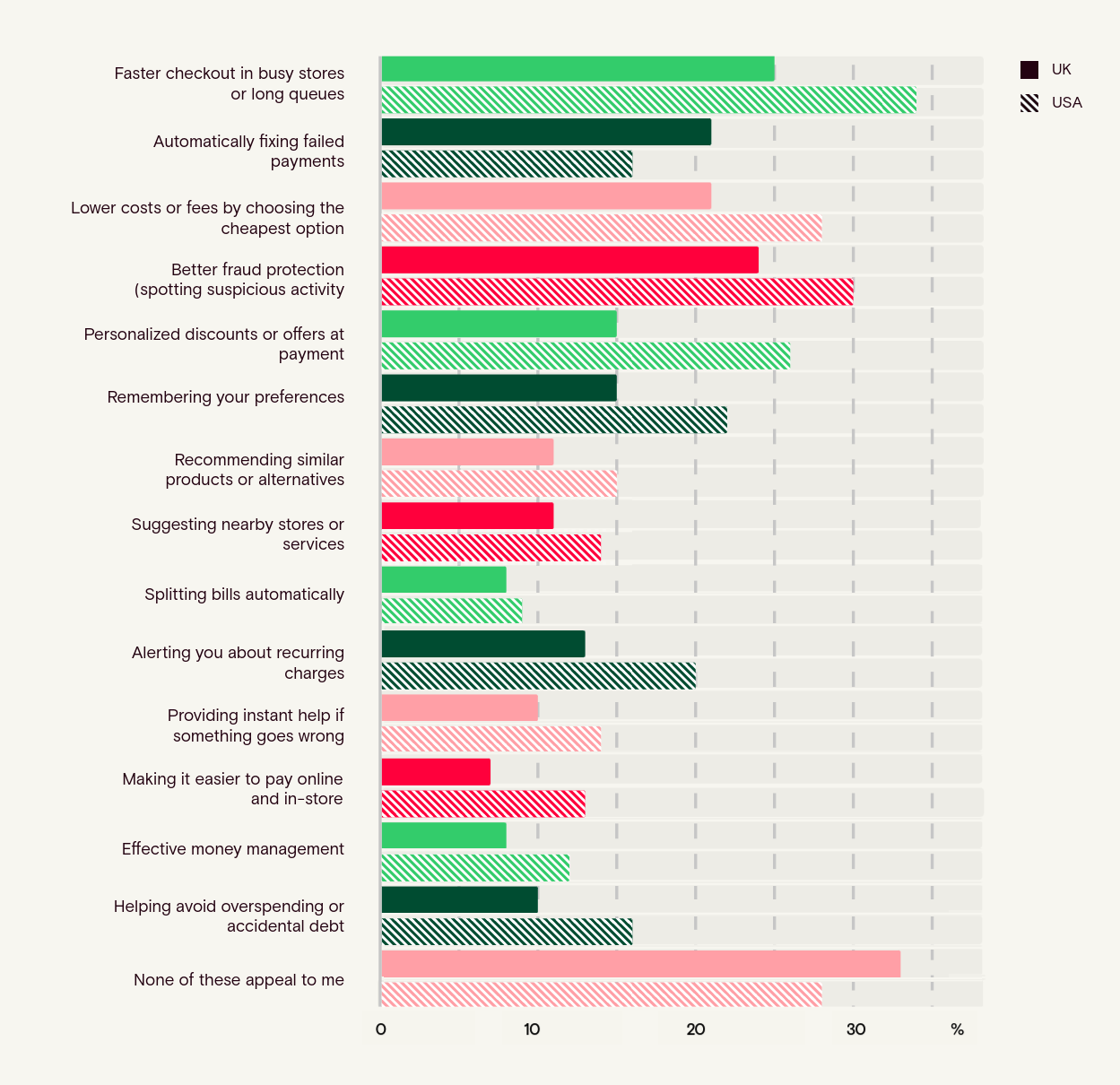

Which of the following benefits of AI-driven payments appeal to you most?

Among younger respondents, the findings show a different dynamic than in prior questions. In the 18 - 24 group, outright rejection of AI-driven payment benefits (“none of these appeal”) is markedly lower in the UK (9%) than in the US (18%), even though UK respondents overall are less comfortable than US respondents with AI selecting payment options. The same reversal appears among 25 - 34s, where rejection remains low in the UK (5%) but is substantially higher in the US (19%).

Across both age groups, appeal for individual benefits such as faster checkout, lower costs, and fraud protection is broadly similar between markets.

Appeal of AI‑driven payment benefits across the US and UK

People are not rejecting every AI-related outcome in payments. They appear more open to specific, situational benefits such as speed or fraud protection, but are far less comfortable with AI taking over payment decisions itself.

From 35 - 44 onwards, attitudes in both countries start to converge into growing resistance. Interest becomes more selective and rejection accelerates, especially in the UK.

Moving up the age groups, the UK reaches this rejection point earlier and more decisively than the US, reinforcing that the difference between markets is about baseline trust and perceived necessity, not feature preference.

We are starting to see the conditions under which AI feels more acceptable: not as an autonomous financial actor, but as a tool that helps with speed, fraud protection, or optimization, all within a clearly contained and defined payment moment.

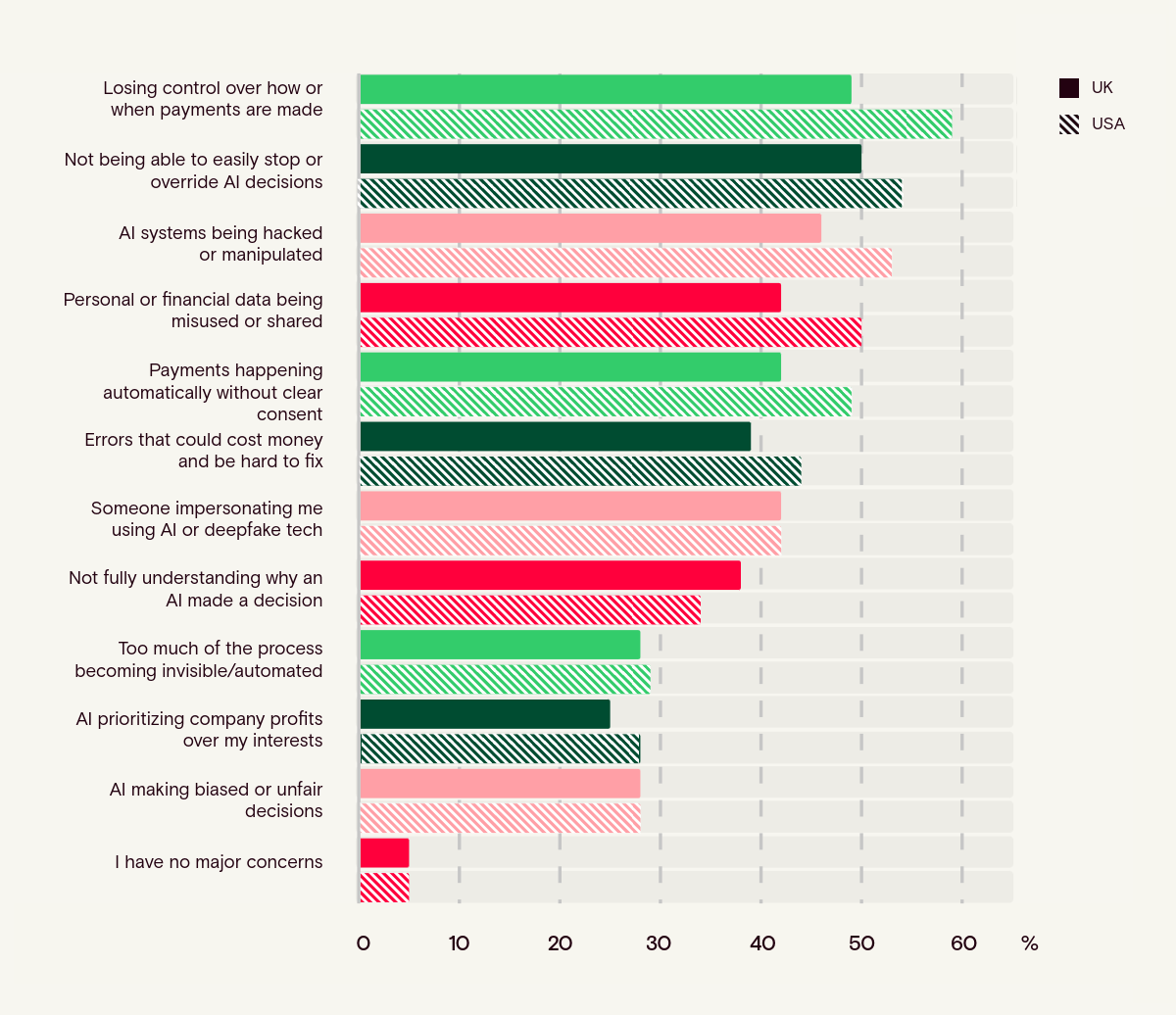

What concerns you most about AI managing or making payment decisions on your behalf?

Concern is near-universal and tightly clustered around control, consent, and security. Loss of control is the leading concern in the US (59%) and a close second in the UK (49%), alongside not being able to stop or override AI decisions (54% US, 50% UK). Security-related fears are consistently higher in the US: 53% worry about AI being hacked (vs 46% UK) and 50% about data misuse (vs 42% UK). Concerns about payments happening without one’s clear consent are also widespread (49% US, 42% UK). Only 5% in either market say they have no major concerns, confirming that scepticism is effectively universal rather than confined to a niche group.

These results point to a clear trust ceiling for AI in payments, and it is being driven less by novelty than by perceived loss of control, weak override, and lack of assured consent.

Gender differences are present but secondary to the overall pattern. In the US, women are consistently more concerned than men across most measures. In the UK, the picture is more mixed: men are more likely than women to cite loss of control (53% vs 46%), while women slightly over-index on concerns around hacking (46% vs 45%).

Age produces the clearest gradient. Concern rises sharply with age and converges across markets. Crucially, where these concerns cluster shows a clear pattern: the most consistent anxieties centre on loss of control, inability to override AI, and payments occurring without clear consent.

This, together with the concern that “too much becomes invisible,” suggests that resistance is less about AI itself than about whether payment decisions remain visible, interruptible, and accountable. For example, among those aged 65+, 73% in the UK and 63% in the US cite losing control over payments; among 55–64s, 64% in the UK and 59% in the US cite not being able to stop or override AI; and among those aged 65+, 64% in the UK and 58% in the US cite payments without clear consent. In other words:

The results point to a gap between technical control and perceived control. Even if the underlying automation is identical, people react far more negatively when decisions ‘feel’ invisible or continuous.

This raises the question: is resistance weaker when AI’s role is narrow, visible, and easier to override? While the data does not measure this distinction directly, it strongly suggests that acceptance improves when AI operates within a clearly defined payment moment. Respondents react most negatively when AI is framed as something that “manages my payments” over time, operating continuously in the background with limited visibility or intervention points. By contrast, sentiment softens when AI is positioned as assisting within a single transaction, where actions are explicit and control remains clear.

Physical checkout is perhaps the clearest example of this moment-bound interaction. The payment is anchored to a specific event: a visible device, a deliberate customer action such as tapping, inserting, or confirming, and immediate feedback. Even when automation or AI-driven logic operates behind the scenes, the payment itself remains a contained, consent-driven moment with a clear start, a clear end, and an obvious opportunity to intervene.

In physical payment environments, the structure of the checkout moment helps mitigate many of the trust concerns raised throughout the research. As the findings suggest, the closer AI gets to acting independently with people’s money, the more trust breaks down around control, consent, and reversibility.

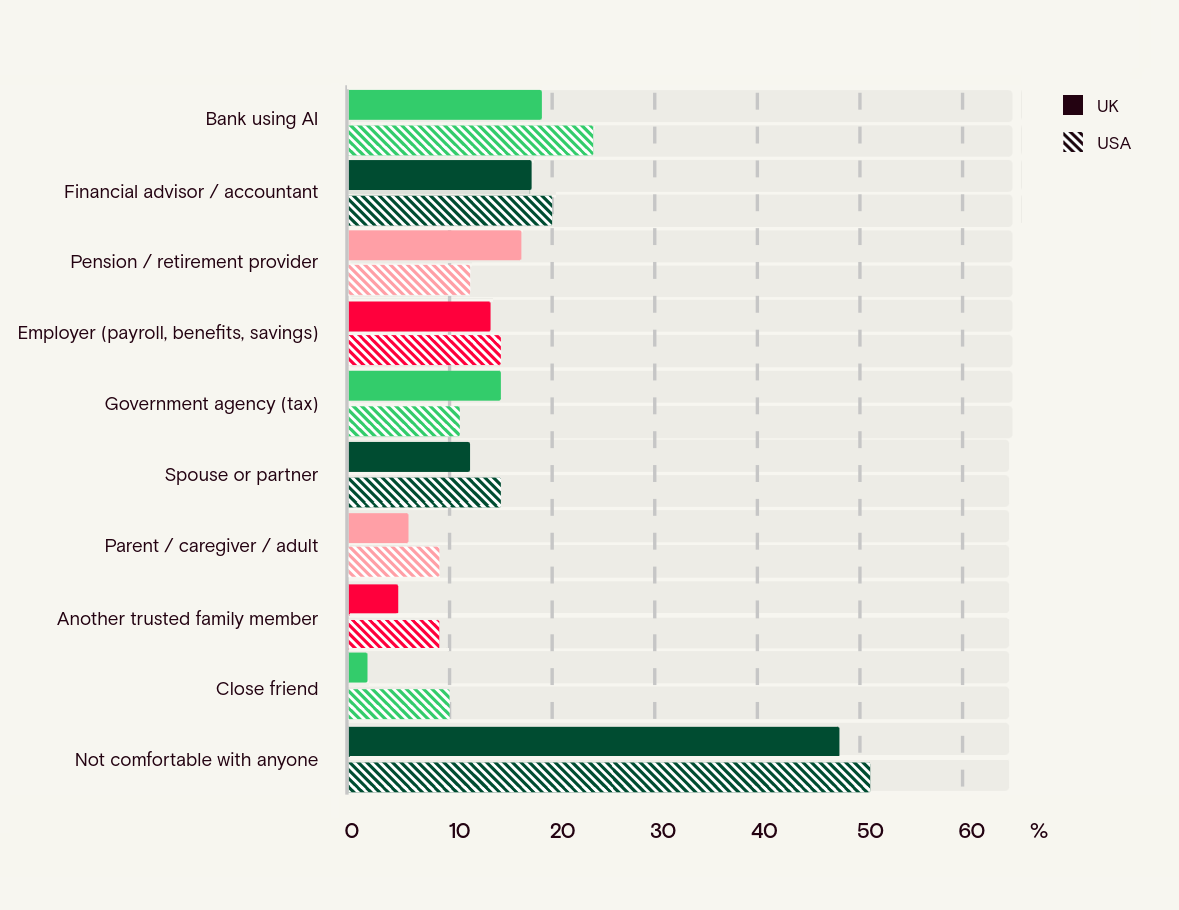

Which of the following people or organisations would you feel comfortable using AI tools or agents to manage your money on your behalf?

Across both countries, comfort with delegating money management to AI is low, and outright rejection is the dominant response. Nearly half of UK respondents (48%) and a majority in the US (51%) say they would not feel comfortable with anyone using AI to manage their money. Where openness does exist, it clusters narrowly around formal financial institutions rather than people. Banks rank highest (19% UK, 24% US), followed by financial advisors or accountants (18% UK, 20% US). Even these are minority positions, indicating conditional tolerance rather than trust.

The findings suggest that the trust problem is not solved simply by placing a familiar institution between the user and the AI.

What is especially revealing is who scores lowest. Personal relationships consistently sit at the bottom in both markets. Only 2% of UK and 6% of US respondents would trust a close friend, and just 6% UK / 9% US would trust a parent or caregiver. Even spouses or partners remain low (12% UK, 15% US). This pattern holds across age groups and strengthens with age. The message is clear: resistance is not about choosing the “right person” to use AI, but about rejecting AI delegation altogether.

Money is viewed as a domain that should remain individual and human, not mediated through either social or relational proxies. That further supports the idea that people are reacting against the transfer of agency itself, not just against particular actors.

Age sharpens this divide further. Younger respondents are the most open, particularly to banks: 32% of UK and 29% of US 18 - 24s are comfortable with a bank using AI, compared with just 9% UK and 18% US among those 65+. Rejection rises steeply with age. By 45 - 54, at least half of respondents in both markets already say they are not comfortable with anyone (52% UK, 50% US), climbing to 83% UK and 70% US among 65+. Across all ages, the UK reaches this rejection point earlier and more decisively.

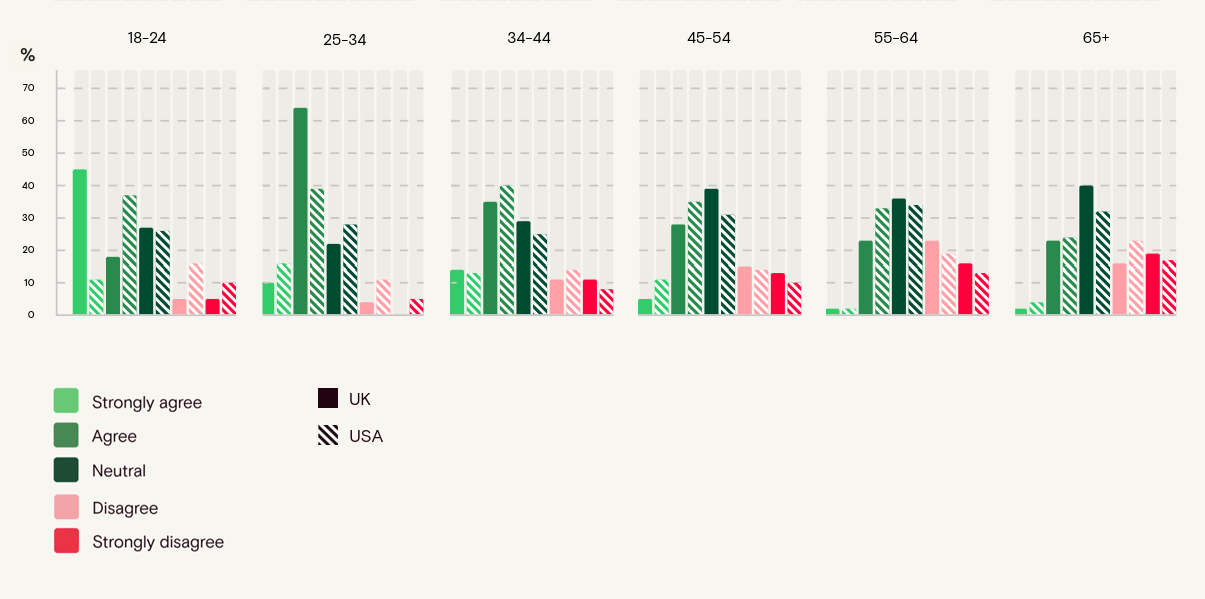

To what extent do you agree or disagree with the following statement: 'In the next decade, AI agents will largely manage everyday payments without much human involvement.'

Nearly half of our 3,000+ respondents expect AI agents to manage everyday payments within the next decade, even if the idea itself leaves them uncomfortable in the present moment. In the UK, 45% agree this will happen (10% strongly), compared with 44% in the US (8% strongly), a minimal difference. Opposition is lower in both countries (26% UK, 24% US), while a sizable minority remain undecided (29% UK, 32% US). This reinforces a recurring theme across the research: belief in inevitability is clear, but personal comfort is missing.

People may see AI-managed payments coming, but that should not be mistaken for consent, readiness, or trust.

Belief that AI agents will manage everyday payments in the next decade, by age

Age shows the clearest divergence, and it differs from earlier findings: agreement peaks among 25 - 34s, especially in the UK (74% agree vs 55% in the US). Among 18 - 24s, the pattern is consistent, with UK respondents more likely to agree than their US peers (63% vs 48%) and US 18 - 24s more likely to sit in neutral territory (26%). From 45 - 54 onwards, agreement declines and disagreement rises in both markets. Among 65+, agreement drops to 25% UK and 28% US, while disagreement reaches 35% UK and 40% US.

Younger groups see AI-managed payments as more likely, while older groups increasingly doubt or resist the outcome.

This tension carries important commercial implications for payment providers: many consumers expect these technologies to arrive soon, yet emotionally they remain hesitant to accept or embrace them.

Respondents in their own words:

The open responses provide important qualitative context to the survey findings, capturing the instinctive language people use when reacting to the idea of AI making payment decisions. Across more than 3,000 responses, the overwhelming use of emphatic and emotionally charged language reveals how strongly respondents feel about control, trust, and autonomy in payments.

The word cloud below highlights the most frequently recurring terms across those responses.

United Kingdom

“I just don't trust AI to do it instead of myself.”

“Awful idea ,people will lose control of own money and privacy”

“I prefer to have the choice myself as I’d feel more in control.”

“Horror. It would be a complete loss of control over my finances.”

“I want to make my own financial decisions and wouldn't trust anything else to make these decisions”

United States

“I'm not interested in giving up that control to a computer.”

“It's uncomfortable because I feel like I'd have no control.”

“I am very independent and don’t want anyone making decisions for me, much less a computer.”

“I hate to think about it because I would lose control of too many aspects of how my money is being handled”

“Absolutely not. I want to control what I pay and how.”

Across both the US and the UK, reactions to AI making payment decisions are overwhelmingly negative. In the US, around 80 - 85% of responses echo a negative sentiment (horror, fear, loss of control). In the UK this is slightly lower, at roughly 70 - 75%, but still dominant (the difference may be due to a different communicative pattern or use of emphatic language).

We are not talking about mild scepticism, but about active resistance. Respondents consistently react with fear, distrust, and strong emotional language. The idea of handing payment authority to AI triggers concerns that go well beyond technological savviness or readiness.

What the wider dataset suggests is that this rejection is not simply about AI being present in payments, but about AI acting in ways that feel invisible, autonomous, and difficult to stop.

Trust, control, and why people object:

The core issue in both markets is not AI capability, but loss of control. Respondents repeatedly stress the need to approve, override, or stop decisions. Trust breaks down at multiple levels, chiefly:

- Loss of control over how or when payments happen

- Difficulty stopping, overriding, or correcting AI-made decisions

- Fear of errors, misuse, hacking, or consent being bypassed

US respondents more often frame this as a personal autonomy issue, a sentiment that could be summarised as: “no one decides for me”, whereas UK respondents tend to emphasise visibility and awareness along the lines of “I need to see and understand what’s happening”.

Despite the mild tonal difference, both samples in the end reach the same conclusion: AI may assist, but it must not decide independently.

Who is more open, and under what conditions:

Positive sentiment exists, but it is limited and conditional: roughly 10 - 15% in the US and 15 - 20% in the UK. Where responses do express openness, they focus on convenience, speed, reminders, or optimization, but in almost every instance this is qualified by conditions around transparency, security, and human control.

Younger adults (25 - 44) are the most pragmatically open if safeguards are explicit, whereas older groups are immovably resistant. Here, negative sentiments and opposition are near absolute.

Gender differences appear to be secondary: women more often cite security, safety, or errors, whereas men more often cite a loss of autonomy, but the common thread remains a need to have full control over money.

Taken together with the earlier questions, this suggests respondents are more likely to tolerate bounded assistance than open-ended delegation. That creates a more realistic entry point for AI in payments: not as a substitute for the payment moment, but as support around it.

Conclusions:

This research surfaces a clear pattern. People are resisting systems that make financial decisions on their behalf. What respondents appear more willing to accept is bounded assistance within a clearly defined payment moment, rather than ongoing delegation to autonomous systems.

In physical payment environments, that moment is structurally anchored: a visible terminal, a deliberate customer action such as tapping, inserting, or confirming, a clear start and end to the transaction, and immediate feedback that the payment has been authorised. These cues make the interaction visible, interruptible, and easy to override, reinforcing a sense of agency even when automation operates behind the scenes.

To interpret what these findings mean for the future of payments, Aevi CEO Mike Camerling highlights a key distinction emerging from the research.

“Agentic commerce isn’t being rejected because it uses AI. It’s being rejected because it removes visibility, perceived control, and a clear moment of consent from payments. When people don’t understand what a system is doing or why a decision was made, trust erodes very quickly.”

This distinction appears repeatedly throughout the findings. Respondents recognise potential benefits such as faster checkout, fraud protection, and cost optimization. However, trust breaks down when AI begins to operate invisibly, continuously, or without clear consent.

“In-person payments already solve many of the trust challenges around AI. There is a human interaction, a visible device, and a deliberate action to complete the transaction. AI’s role is not to replace that moment, but to enhance it by helping select the right offers, loyalty benefits, or payment methods at the point of sale.”

Mike Camerling, CEO, Aevi

Worries and fears are tightly clustered around control, consent, and security. Loss of control over payments, the inability to override decisions, and fears around hacking or misuse of financial data dominate responses in both the UK and the US. Only around 5% of respondents report having no major concerns.

At the same time, many consumers expect AI-managed payments to become part of their everyday payment interactions in the coming decade. Younger respondents in particular see this as extremely likely, even if they remain cautious about the idea today. This highlights an important tension for the industry as a whole: just as with other payment innovations such as crypto payments and biometrics, the expectation of adoption is running ahead of emotional acceptance.

For payment service providers, fintech platforms, and merchants, this gap has clear commercial implications. AI capabilities may be the next frontier, but consumer trust will depend heavily on how those capabilities are introduced.

The research suggests that acceptance is most likely where AI remains visible, bounded, and easy to override. Physical payment environments naturally provide many of these characteristics: a clear start and end to the interaction, a visible interface, and an explicit customer action.

As a result, the near-term future of AI in payments may not be fully autonomous commerce, but AI-assisted payment moments. Systems that optimize offers, recommend payment methods, detect fraud, or streamline checkout, while leaving the final decision with the customer, align far more closely with current consumer expectations.

Methodology:

This report draws on a survey of more than 3,000 adults across the United Kingdom and the United States, exploring attitudes towards AI-driven and agentic commerce in payments. The sample was balanced across key demographics, including age and gender, to ensure broad representativeness across both markets.

Alongside the quantitative analysis, the study also incorporated open-ended responses from participants. Nearly 3,000 qualitative comments were examined to identify recurring themes, including the use of emphatic and emotionally expressive language around trust, control, and autonomy in payments.
